When a table is copied or exported, the plugin needs to read and write all table rows. For large tables this might result in a fatal error:

Fatal error: Allowed memory size of 268435456 bytes exhausted (tried to allocate 4096 bytes)

One way to solve this issue is to increase WP_MAX_MEMORY_LIMIT. But no matter how high you set this value, tables can grow bigger.

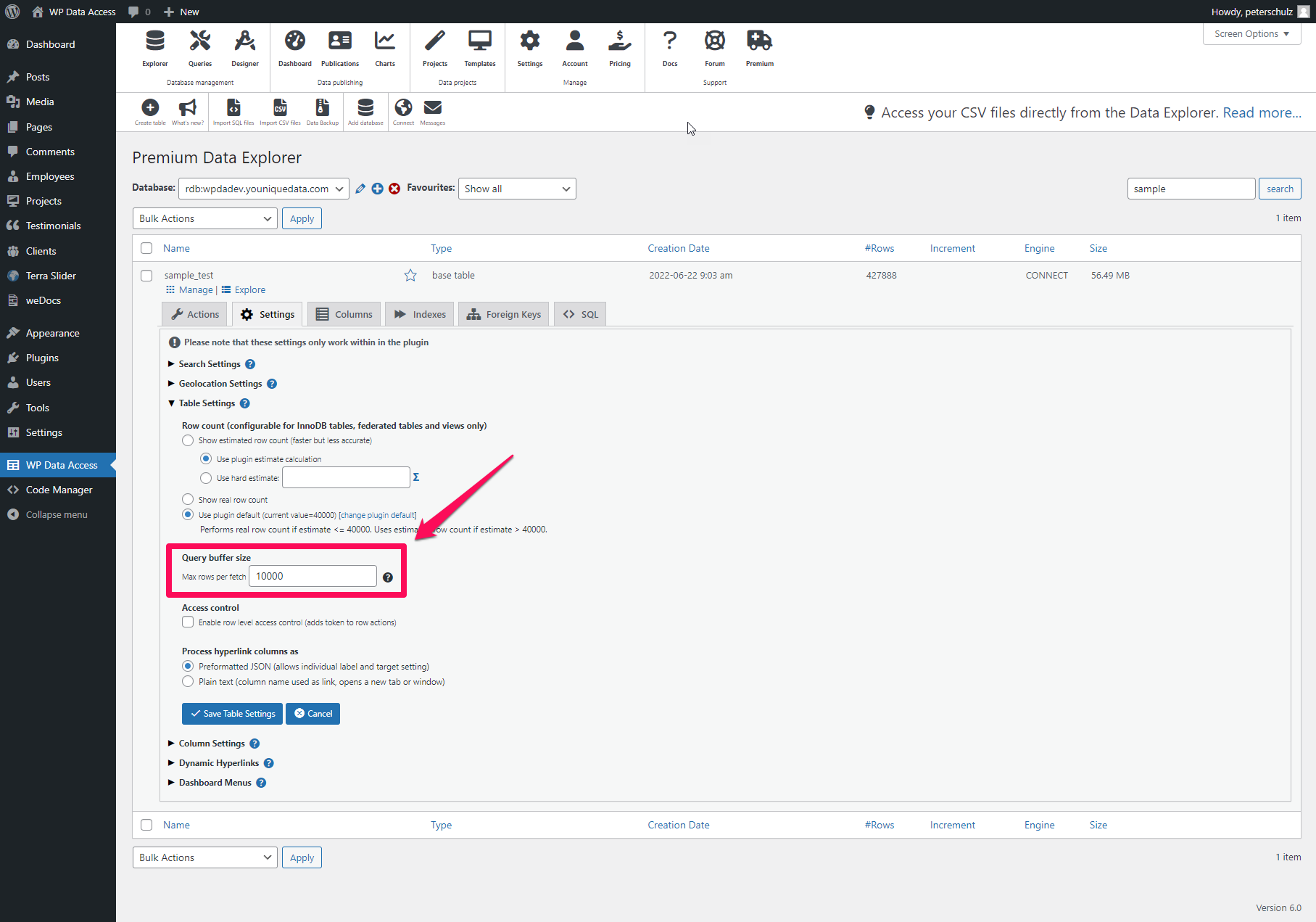

To solve this issue and allow the plugin to read and write rows in batches the query buffer size was introduced in version 5.2. The query buffer size can be defined per table from the Data Explorer > Settings > Table Settings (see image below – click to enlarge).

This value is empty by default, which leads to a full table read and write. Enter a sufficient value if you experience issues when copying or exporting large tables. A higher value increases the change of a fatal error. A lower value might increase execution time. There is no one size fits all. It depends on the amount of storage used per table row.

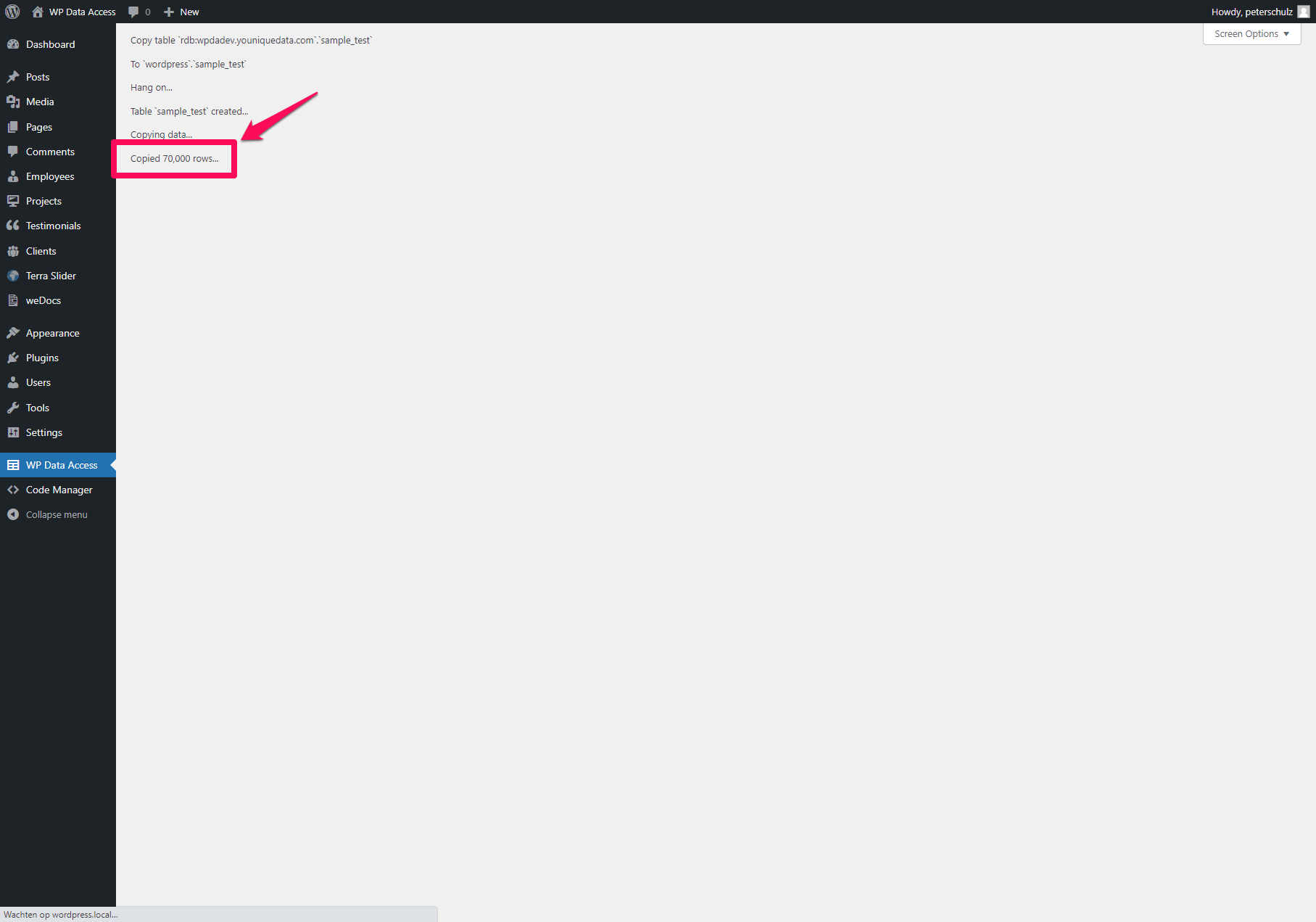

When the query buffer size is defined, the copy table user interface looks different. A page is presented showing the copy process (see image below – click to enlarge). The number of rows copied will be updated each time a buffer was processed.

Notes

- Do not close your browser or tab while a copy table or table export is in progress. This is will terminate the process.

- You can cancel a copy table or export job any time. Data already copied or exported is not deleted automatically.

- My largest test was a table containing more then 12.5 million rows. My laptop needed about 5 hours to copy all rows. It worked like a charm. 😉

0 Comments